Why Observability Is an Engineering Skill, Not a Tool Choice

Feb 01, 2026 • 10 min read • Backend, Observability, Engineering, Production, Reliability

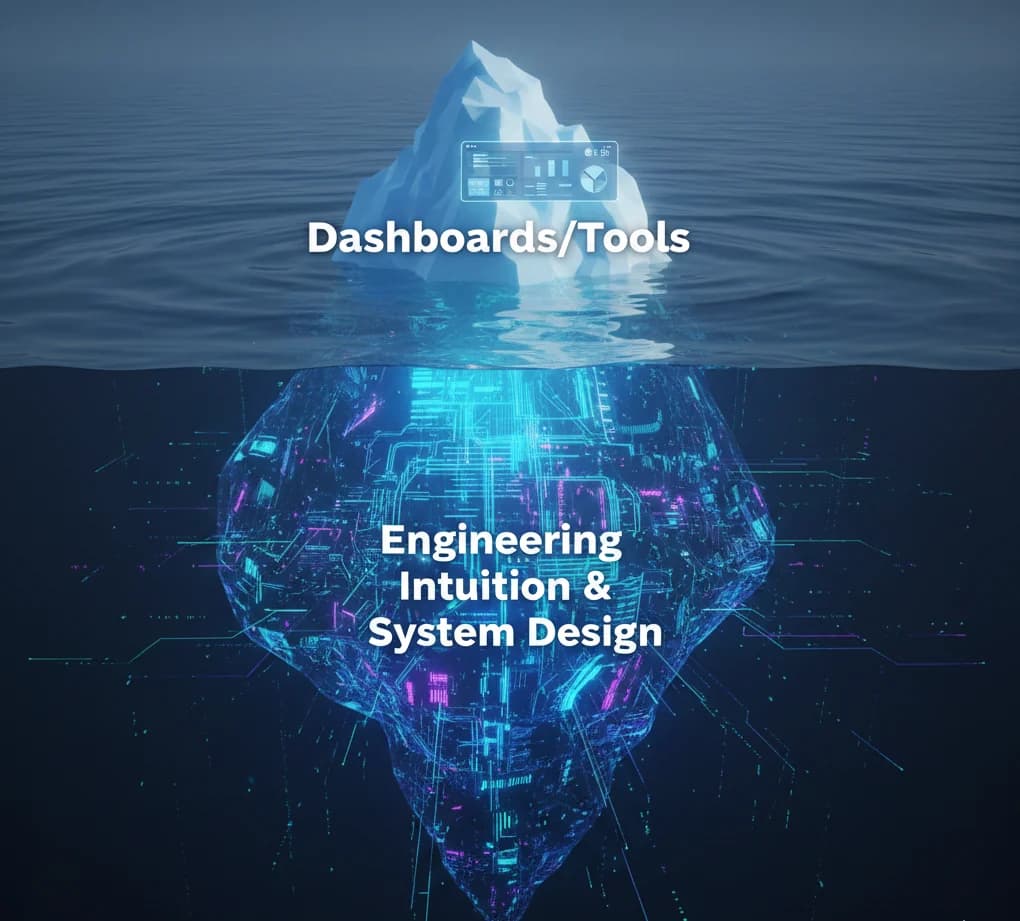

Observability isn’t something you buy or plug in. It’s a way of thinking about systems that reflects how engineers design, reason, and take ownership.

When teams talk about observability, the conversation usually starts with tools.

- Which logging platform to use?

- Which metrics system is better?

- Which tracing vendor scales best?

- Should we host our own observability stack or use a SaaS?

Those choices matter - but they miss the point. After working on production systems long enough, one thing becomes clear: Observability is not a tool choice. It’s an engineering skill. And like most skills, it shows up in design decisions long before any tool is configured.

1. Tools Amplify Thinking; They Don't Replace It

It’s easy to believe that adding a world-class observability stack will magically make a system understandable. In reality, tools only expose what the system already makes visible. If your system hides intent, obscures control flow, or fails silently, no amount of expensive dashboards will fix that.

Good observability starts inside the code. It is the practice of 'leaving breadcrumbs' so that a future version of you (stressed and tired) can reconstruct the truth. Two teams can use the same version of SigNoz or Datadog, and one will find a bug in minutes while the other spends hours. The difference isn't the tool; it's the instrumentation.

| Aspect | Observability as a Tool | Observability as a Skill |
|---|---|---|
| Goal | Collecting data (CPU, RAM, Logs) | Answering questions about behavior |
| Process | Installing agents and SDKs | Designing explicit state transitions |
| Outcome | Beautiful, but often noisy, charts | Reduced uncertainty during incidents |

2. The Anatomy of a 'Quiet' Failure

Standard metrics like CPU and Memory are 'loud' signals. They scream when something is catastrophically wrong. But the most dangerous bugs in production are 'quiet'. They are the logical inconsistencies where the system returns a 200 OK, but the data is wrong.

Observability as a skill means identifying these silent failure modes and creating 'Domain-Specific Probes'.

Instead of just measuring 'Request Count', you measure 'Orders Placed vs. Successful Payments'. If that ratio drifts, you have a signal that standard infrastructure metrics would never catch.
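A domain-specific probe of this kind can be sketched in a few lines. This is a minimal in-memory illustration, not a production design: the `OrderPaymentProbe` class, its method names, and the `minRatio` and `minOrders` thresholds are all hypothetical, and a real system would export these counters through its metrics library instead of holding them in memory.

```javascript
// Hypothetical probe: tracks business events, not infrastructure signals.
class OrderPaymentProbe {
  constructor({ minRatio, minOrders }) {
    this.minRatio = minRatio;   // assumed alert threshold (e.g. 0.9)
    this.minOrders = minOrders; // skip the check until the sample is meaningful
    this.ordersPlaced = 0;
    this.paymentsSucceeded = 0;
  }

  recordOrderPlaced() {
    this.ordersPlaced += 1;
  }

  recordPaymentSucceeded() {
    this.paymentsSucceeded += 1;
  }

  // Share of placed orders that ended in a successful payment.
  ratio() {
    return this.ordersPlaced === 0 ? 1 : this.paymentsSucceeded / this.ordersPlaced;
  }

  // True when enough orders were seen and the ratio fell below the threshold.
  isDrifting() {
    return this.ordersPlaced >= this.minOrders && this.ratio() < this.minRatio;
  }
}

// Simulate 100 orders where every fourth payment quietly goes missing.
const probe = new OrderPaymentProbe({ minRatio: 0.9, minOrders: 20 });
for (let i = 0; i < 100; i++) {
  probe.recordOrderPlaced();
  if (i % 4 !== 0) probe.recordPaymentSucceeded();
}

console.log(probe.ratio(), probe.isDrifting());
```

Every request in this simulation could have returned a 200 OK, yet the probe still reports drift, because it watches the business outcome and not the HTTP status.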

3. Thinking in Spans: Designing for Traceability

Tracing is the ultimate test of how well an engineer understands their system's flow. It works best when boundaries are explicit and async work is intentional. If you find it hard to instrument a trace, it’s usually a signal that your system’s lifecycle is too tightly coupled or poorly understood.

Highly observable systems don't just 'pass' a TraceID; they enrich it at every step with business context.

```javascript
// ❌ RECOVERY-HOSTILE CODE
async function handleOrder(order) {
  await validate(order);
  await save(order);
  await notify(order); // If this fails, which order was it? Why did it trigger?
}
```

```javascript
// ✅ OBSERVABILITY-FIRST CODE (Thinking in Spans)
import { trace, SpanStatusCode } from '@opentelemetry/api';

// The tracer name is illustrative; validate, save, notify, and logger are the application's own.
const tracer = trace.getTracer('order-service');

async function handleOrder(order) {
  return tracer.startActiveSpan('handleOrder', { attributes: { 'order.id': order.id } }, async (span) => {
    const startedAt = Date.now();
    try {
      const user = await validate(order);

      // Enriching the span with business context makes it searchable
      span.setAttribute('user.id', user.id);
      span.setAttribute('order.total', order.amount);

      await save(order);
      logger.info("Order persisted successfully", {
        orderId: order.id,
        userId: user.id,
        // The API-level span exposes no duration, so elapsed time is measured here
        latency_ms: Date.now() - startedAt
      });

      await notify(order);
    } catch (err) {
      span.recordException(err);
      span.setStatus({ code: SpanStatusCode.ERROR, message: err.message });
      throw err;
    } finally {
      span.end();
    }
  });
}
```

4. Socio-Technical Observability: Communication via Data

In a growing organization, observability is the primary language between teams. When the Frontend team sees a 500 Internal Server Error, can they look at a trace and tell exactly which Backend service failed without messaging you? If they can't, your observability isn't working.

A skilled engineer writes logs and spans not just for themselves, but as a public API for other engineers to consume. This reduces the Slack-driven debugging cycle and allows teams to move independently.
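One way to treat logs as a public API is to agree on a small set of required fields and enforce them where events are created. The sketch below is only an illustration of that idea: the field names in `REQUIRED_FIELDS`, the `buildLogEvent` helper, and the service and event names are all assumptions, not an established standard.

```javascript
// Hypothetical contract: every log event must carry these fields so that
// other teams can filter and correlate without asking the owning team.
const REQUIRED_FIELDS = ['service', 'event', 'traceId', 'severity'];

function buildLogEvent(fields) {
  const missing = REQUIRED_FIELDS.filter((name) => fields[name] === undefined);
  if (missing.length > 0) {
    // Fail loudly where the event is created, not during an incident.
    throw new Error(`log event missing required fields: ${missing.join(', ')}`);
  }
  return { timestamp: new Date().toISOString(), ...fields };
}

// A consumer on another team can see which service failed and which
// upstream dependency was involved, using only the trace ID.
const failureEvent = buildLogEvent({
  service: 'payment-service',
  event: 'payment.authorization_failed',
  traceId: 'trace-123',
  severity: 'error',
  upstream: 'card-gateway',
});

console.log(JSON.stringify(failureEvent));
```

Rejecting incomplete events at creation time keeps the contract honest: a log line that cannot be correlated never reaches the shared pipeline.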

5. The Cardinality Secret: Why Your Metrics Lie

A key skill in observability is understanding Cardinality - the number of unique values a field or label can take.

Traditional metrics are low-cardinality; they tell you the average CPU across a cluster. But a Senior Engineer knows that averages hide the truth.

High-cardinality observability allows you to look at a single UserID, a specific ContainerID, or a unique TransactionID across millions of requests. This is where the 'needle in the haystack' is found. If you don't design your system to emit high-cardinality metadata, you are effectively looking at your production environment through a blurry lens.
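A small worked example shows how an average can hide a localized problem. The sample data, the `customerTier` field, and the helper names below are made up for illustration; the point is only the difference between one global number and the same data grouped by a dimension.

```javascript
// Made-up sample: 8 fast requests from one tier, 2 slow ones from another.
const requests = [
  ...Array.from({ length: 8 }, () => ({ customerTier: 'standard', latencyMs: 100 })),
  ...Array.from({ length: 2 }, () => ({ customerTier: 'enterprise', latencyMs: 1100 })),
];

function mean(values) {
  return values.reduce((sum, value) => sum + value, 0) / values.length;
}

// Group latencies by the given field and return the mean per group.
function meanLatencyBy(items, key) {
  const groups = new Map();
  for (const item of items) {
    const groupKey = item[key];
    if (!groups.has(groupKey)) groups.set(groupKey, []);
    groups.get(groupKey).push(item.latencyMs);
  }
  const result = {};
  for (const [groupKey, latencies] of groups) {
    result[groupKey] = mean(latencies);
  }
  return result;
}

const globalMean = mean(requests.map((r) => r.latencyMs));
const meanByTier = meanLatencyBy(requests, 'customerTier');

console.log(globalMean); // 300
console.log(meanByTier); // { standard: 100, enterprise: 1100 }
```

The global mean of 300 ms looks unremarkable, while the grouped view shows that one tier is an order of magnitude slower than the other.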

The Power of Dimensions

A metric like request_latency on its own is one-dimensional: a single series with nothing to slice by.

A metric like request_latency {region="us-east-1", customer_tier="enterprise", version="v2.1.0"} is multi-dimensional.

The latter allows you to prove that an incident is only affecting Enterprise users on a specific version - saving you from a blind global rollback.

6. The Observer’s Checklist for PR Reviews

When reviewing code, stop looking only for logic. Start looking for visibility. Ask these four questions before approving any change:

- The Correlation Test: If this function fails, can I find the exact log line using only the TraceID from the error response?

- The Cardinality Test: Are we logging high-cardinality data (like UUIDs) correctly so it is searchable but doesn't blow up our storage costs?

- The Silent Failure Test: Are we catching an error and just 'logging it' without incrementing a failure metric? If so, we are creating a blind spot.

- The Human Readability Test: If I’m paged at 3 AM, will this log message tell me what to do, or just that 'something happened'?
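As a rough illustration of what passing the Correlation, Silent Failure, and Human Readability tests might look like in a single error path, consider the sketch below. Everything in it is hypothetical: `failureCounts` and `logLines` stand in for a real metrics client and logger, and `sendReceipt`, the metric name, and the message wording are invented for the example.

```javascript
// Hypothetical stand-ins for a metrics client and a structured logger.
const failureCounts = new Map();
const logLines = [];

function incrementFailure(name) {
  failureCounts.set(name, (failureCounts.get(name) || 0) + 1);
}

function logError(message, context) {
  logLines.push({ level: 'error', message, ...context });
}

// deliver is whatever function actually sends the receipt (assumed async).
async function sendReceipt(order, traceId, deliver) {
  try {
    await deliver(order);
    return { ok: true, traceId };
  } catch (err) {
    // Silent Failure Test: count the failure, do not only log it.
    incrementFailure('receipt.delivery_failed');
    // Correlation and Human Readability Tests: same traceId as the response,
    // and a message that says what state things are in and what to do next.
    logError('Receipt delivery failed; the order is saved, retry delivery for this order', {
      traceId,
      orderId: order.id,
      cause: err.message,
    });
    return { ok: false, traceId };
  }
}

sendReceipt({ id: 'order-42' }, 'trace-abc', async () => {
  throw new Error('SMTP timeout');
}).then((response) => {
  console.log(response, failureCounts.get('receipt.delivery_failed'), logLines.length);
});
```

The caller receives the same `traceId` that appears in the log line, so the path from an error response to its cause needs no guesswork.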

Final Thoughts: A Shift in Identity

Observability isn’t about which tool you use. It’s about whether your system can answer hard questions under pressure. It’s about moving from an Implementer - who just writes code - to an Engineer - who takes responsibility for how that code behaves in the wild.

The best systems in the world weren't built with the most expensive tools; they were built by engineers who refused to be blind to their own creations. Build for visibility today, so you don't have to build for recovery tomorrow.

Thanks for reading.

Written by Sanket Dofe

Full-stack engineer & system architect. I build scalable products and write about engineering clarity.
